When you sit down to have a beer, the last thing you think about is Artificial Intelligence (AI). You just want to enjoy that more or less bitter taste that beer provides and that refreshes you so much, even in winter. Well, that taste is partly thanks to a company called Carlsberg, which almost 150 years ago started one of the first industrial research labs. The lab discovered a way to purify yeast that made consistent beer production possible. The company decided to share it freely with other brewers, and Carlsberg yeast is used in most of the world's lagers brewed today. Another invention, the pH scale, which measures acidity (based on the concentration of hydrogen ions in a solution), was developed in these laboratories; it too was given away for free and is still used today. We could call this an "open source" spirit.

Carlsberg has been running an AI project for several years. The aim is to use sensors to measure aspects related to beer. In addition to detecting contaminants in soil, air or water, this will allow the company to move beyond chromatography and spectrometry for detecting new flavours and aromas, a process that can take hours and has to be repeated for each fermentation tested. That process will now be significantly shortened. Carlsberg is working in collaboration with several universities and Microsoft. Microsoft, for its part, has presented an interesting study, prepared by EY, which maps the process of AI adoption and studies how the main brewing companies in 15 European countries are benefiting from AI.

We have cited the example of one of the four most important brewing companies in the world to convey a key idea: AI is not just about technology, but about a way of seeing the world. It is necessary to create a culture around AI.

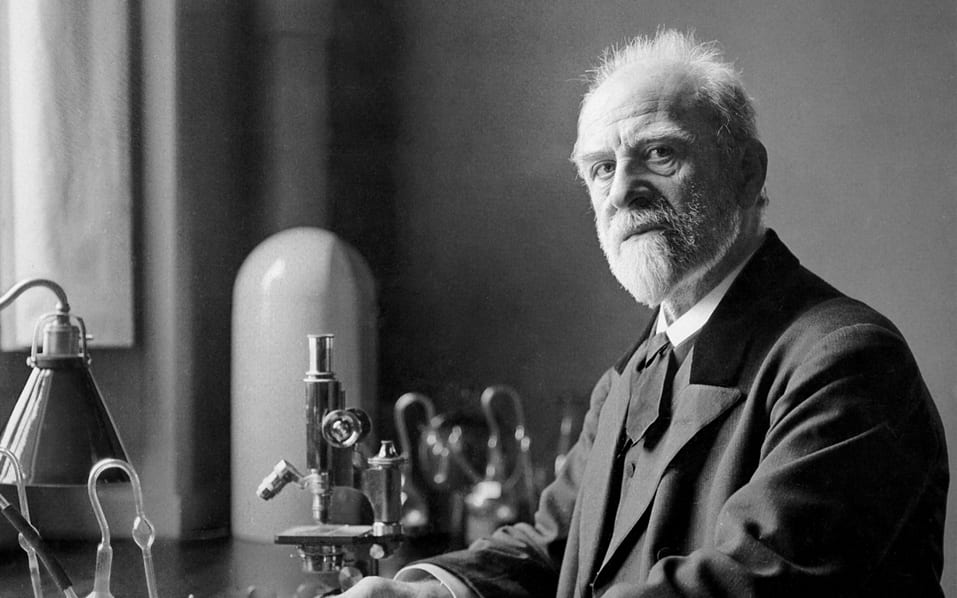

Emil Christian Hansen, department head at the Carlsberg Research Laboratory, developed the first method of cultivating pure yeast in 1883, which revolutionised the brewing industry.

Source: www.carlsberggroup.com

"It's hard to innovate a 5,000 year old product but when you've seen every molecule in beer and every gene in barley, you realise there are still endless opportunities to create an even better beer."

Birgitte Skadhauge. Vice President of Carlsberg Research Group.

The Microsoft study offers us a very interesting tool to understand what we have introduced. For each capability, it describes how important companies consider it to be, as well as the degree of competence (knowledge) that they claim to have in it.

Open culture

Perhaps this is the core element, because the crux of the matter is not in the data but in its treatment. With a relatively small gap between its importance (average 3.9/5) and the competence (average 3.2/5) assumed by the companies interviewed, creating an open culture is one of the capabilities where leading AI companies feel most comfortable. However, a problem in this regard is the concept of sharing data openly, when the value of the data remains largely unknown until it has been treated, processed or combined with other datasets. This problem is also likely to come from within the organisation, when sharing data between departments. In any case, a policy of transparency about ongoing projects and desired outcomes will help establish collaboration through cross-organisational projects or co-ownership between AI experts and companies.

External partnerships

One of the main problems detected is companies' lack of experience in AI projects, together with the shortage of talent, both in the market and within companies, to tackle this type of project.

In Spain, 90% of the companies interviewed consider themselves moderately to highly competent in external alliances and rate their importance at 3.5/5 on average. This indicates that most of them have already participated in some kind of shared project and have therefore acquired some experience in this field.

One example of this model of external alliances is Pavabits, a company that emerged from more than five years of collaboration between the construction company Pavasal and Cuatroochenta. The collaboration resulted in cloud software for digitalising the reception and validation of invoices, which Pavabits will now market as Invoice System.

Advanced Analytics and Data Management

There are two other essential elements that we must work on if we want to implement an AI strategy in our company: Advanced Analytics and Data Management. We have already talked about both in previous articles.

With Advanced Analytics (AA) or Business Intelligence (BI), it is important to look inside the company and train current employees in AI skills, creating a hybrid profile that will have an impact on future projects. It is certainly one of the skills considered most important in relation to AI, with an importance score of 4.2/5. However, there is room for improvement: the competence level of Spanish companies stands at 3.2/5, only one tenth of a point below the European level.
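The gap between importance and competence can be worked out directly from the scores quoted so far. The snippet below is a minimal sketch that uses only the figures given in this article; the `SCORES` table and the `capability_gaps` helper are illustrative names, not part of the Microsoft study. Note that the Open culture figures are averages across the companies interviewed, while the Advanced Analytics competence score is the Spanish one, so the comparison is only indicative.

```python
# Scores quoted in this article, on a 1-5 scale: (importance, competence).
SCORES = {
    "Open culture": (3.9, 3.2),
    "Advanced analytics": (4.2, 3.2),
}


def capability_gaps(scores):
    """Return importance minus competence per capability, largest gap first."""
    gaps = {name: round(imp - comp, 1) for name, (imp, comp) in scores.items()}
    return dict(sorted(gaps.items(), key=lambda item: item[1], reverse=True))


gaps = capability_gaps(SCORES)
for name, gap in gaps.items():
    print(f"{name}: gap of {gap} points")
```

A larger gap suggests a capability that companies value but do not yet feel they master, which is where training effort is likely to pay off first.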

The other aspect is Data Management (DM). It is essential to have optimal strategies that allow us to capture, store, structure, tag, access and govern data in order to build the basis and infrastructure to work with AI technologies. From Microsoft's report it is clear that:

The companies surveyed spend between 2 and 3 years creating the right data infrastructure for AI, and even more in the case of very ambitious projects that require further refinement of these infrastructures.

To create accurate and useful AI solutions, companies need not only a large amount of data, but also accurate data that is properly structured and labelled. Failure to do so can result in data that is simply useless, as its use could lead to undesirable or unreliable results.

We find many companies that are concerned with cleaning, structuring and migrating historical data to take advantage of it; others have opted to build new data structures from scratch to collect the right data in the future.

60% of advanced companies report using hybrid architectures that combine on-premises and cloud-based storage, while the less advanced rely primarily on on-premises platforms.

There is one element that, while cross-cutting, is crucial in data processing: the recent implementation of GDPR in the EU has highlighted the need to govern data and to know what it is used for.

In fact, the 3 most important risks associated with AI projects in Spain that are perceived as a brake are the following:

Top 3 business risks in Spain

Synthetic data

Synthetic data is part of what some have called "critical technologies" for AI 2.0. Essentially, it is about "manufacturing data" to eliminate the risk of sensitive information leakage and to address regulatory and GDPR issues. It is not just that this data is "artificially" generated: treated in the right way, it allows us to "anonymise" or "pseudonymise" the underlying information. Nowadays data aggregation is preferred, as it avoids inference of the deleted data and thus prevents it from being reconstructed, but this comes at a cost: the "summaries" generated in aggregation reduce too much of the informational value of the data. There are other techniques that we will not go into here.
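To make the trade-off between pseudonymisation and aggregation concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the records, the `SECRET_KEY`, and the helper names `pseudonymise` and `aggregate_by_city` are hypothetical, and a keyed hash (HMAC-SHA256) is just one common way to pseudonymise an identifier, not the method of any company mentioned here.

```python
import hashlib
import hmac
from collections import defaultdict
from statistics import fmean

# Hypothetical records: (customer_id, city, monthly_spend). Illustrative values only.
RECORDS = [
    ("ana@example.com", "Valencia", 120.0),
    ("luis@example.com", "Valencia", 80.0),
    ("marta@example.com", "Madrid", 200.0),
    ("ana@example.com", "Valencia", 100.0),
]

# Assumption: the key is stored separately from the dataset.
SECRET_KEY = b"keep-this-key-outside-the-dataset"


def pseudonymise(customer_id, key=SECRET_KEY):
    """Replace an identifier with a keyed hash, truncated to 16 hex characters."""
    digest = hmac.new(key, customer_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def aggregate_by_city(records):
    """Summarise average spend per city; individual rows are no longer visible."""
    by_city = defaultdict(list)
    for _, city, spend in records:
        by_city[city].append(spend)
    return {city: fmean(values) for city, values in by_city.items()}


# Row-level detail survives, but the direct identifier is replaced.
pseudonymised = [(pseudonymise(cid), city, spend) for cid, city, spend in RECORDS]

# Only one summary figure per city survives.
summary = aggregate_by_city(RECORDS)
```

In the pseudonymised rows the same customer still maps to the same token, so behaviour over time can be analysed, but anyone holding the key can link the token back to the person. The aggregated summary removes that possibility, at the price of losing everything except one average per city.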

Synthetic data is generated by a machine learning model that has been previously trained on the original data. The purpose of training the model is to identify the existing activity patterns in the data, in order to be able to reproduce them. In other words, the model must be able to generate a new set of data whose statistical properties are the same as those of the original data.
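The idea of "learning the patterns, then generating new rows" can be sketched with a deliberately simple stand-in for a machine learning model: a bivariate Gaussian fitted to two numeric columns. This is an assumption made for illustration, far simpler than the generative models used in practice, and the data (monthly visits and monthly spend per customer) is hypothetical. The function names `make_original`, `fit_gaussian` and `sample_synthetic` are likewise invented for this example.

```python
import math
import random
import statistics


def make_original(n=200, seed=42):
    """Hypothetical 'original' data: (monthly_visits, monthly_spend) per customer."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        visits = rng.gauss(10, 3)
        spend = 5 * visits + rng.gauss(0, 10)
        rows.append((visits, spend))
    return rows


def fit_gaussian(rows):
    """'Train' the model: estimate means, standard deviations and correlation."""
    xs = [row[0] for row in rows]
    ys = [row[1] for row in rows]
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    std_x, std_y = statistics.pstdev(xs), statistics.pstdev(ys)
    cov = statistics.fmean([(x - mean_x) * (y - mean_y) for x, y in rows])
    return {"mean": (mean_x, mean_y), "std": (std_x, std_y), "rho": cov / (std_x * std_y)}


def sample_synthetic(model, n, seed=0):
    """Generate new rows that follow the fitted means, spreads and correlation."""
    rng = random.Random(seed)
    (mean_x, mean_y), (std_x, std_y) = model["mean"], model["std"]
    rho = model["rho"]
    residual = math.sqrt(max(0.0, 1.0 - rho ** 2))
    rows = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        x = mean_x + std_x * z1
        y = mean_y + std_y * (rho * z1 + residual * z2)
        rows.append((x, y))
    return rows


original = make_original()
model = fit_gaussian(original)
synthetic = sample_synthetic(model, 5000)

# Refit on the synthetic rows to compare statistical properties with the original.
refit = fit_gaussian(synthetic)
print("original :", model)
print("synthetic:", refit)
```

None of the synthetic rows is a copy of an original record, yet the refitted means, standard deviations and correlation should come out close to those of the original data. A real project would use a richer model and would also need to check that rare, identifiable individuals cannot be inferred from the output.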

What do companies use AI for?

1. Predicting.

74% of companies see prediction as a relevant use of AI. This functionality includes:

Analysing the causes of churn, identifying customers at risk of leaving and proactively engaging with them to prevent it. Sales teams also use predictive analytics, for example to identify the leads with the highest likelihood of conversion.

For their part, companies that sell or operate advanced and expensive machinery use predictive maintenance to save costs by improving maintenance and reducing downtime.

2. Automating.

It is estimated that between 20% and 30% of the tasks performed in companies can be automated. A growing use is the implementation of chatbots that help improve the relationship with the user and allow us to obtain information that can be fed back into the AI circuit.

3. Generating knowledge for decision-making.

58% of companies see AI as a way to make better decisions. With the right data infrastructure in place, data analysis can be accelerated, trends can be spotted and processes such as product development can be sped up.

4. Personalising.

44% of companies use AI to personalise the user experience, not only at the moment of consumption (purchase) of a good or service, but also in the previous and subsequent phases. In this sense, Cuatroochenta has developed a product and service recommendation engine for customers of a hotel chain, based on their usage history and their own profile.

5. Prescribing.

This ranges from suggestion and recommendation engines that help in decision-making to other, more advanced types of support that require a high volume of information. For example, Cuatroochenta schedules the cleaning of toilets in airports according to different variables, such as the order of arrival of planes, boarding gates, available staff, etc.

"AI will allow objects to understand, making them more useful. Of course, AI will not only benefit our relationship with objects, but also automate all kinds of processes that were previously too complex for industry or services."

Claudio F. González. Professor at the UPM. El gran sueño de China.

In short

The transformation of organisations driven by AI is continuous. This requires seeing AI as a process, not a project. So if your company has not yet made the leap, now is the time to do so by automating small tasks. It is simple. Little by little, the level of technology and employee training will increase. Companies that are already in the process are sure to stay motivated to keep exploring use cases, sometimes with uncertain results, but which always serve as an impetus to continue leading the advance towards the customer and the employee.